Why Marketers Should (Almost) Always Use Control and Test Groups

Published on October 13, 2016/Last edited on October 13, 2016/5 min read

Team Braze

Long gone may be your science-fair days of creating experiments with a hypothesis and testing the effects of an independent variable on a dependent variable, compared to the results of a control group.

Except, day in and day out, that’s what marketing is all about.

The most exciting part of marketing experiments? Trying something totally new as a company, like launching a new messaging channel, a new look and feel, or a new style and tone, which can be just as scary as it can be exhilarating. To take on the task, it’s a good idea to have a control group of users who don’t receive these new campaigns, so you can compare key results between the group that receives the new campaigns and the group that sees only your regular marketing communications.

What should you be looking for? When you set up test groups and compare them to your control groups, be on the lookout for differences in the specific conversion you’re tracking for the campaign, as well as in down-the-line retention. This will help you understand what’s working and what’s not, and allow you to be thorough when reporting on your progress and implementing next steps.

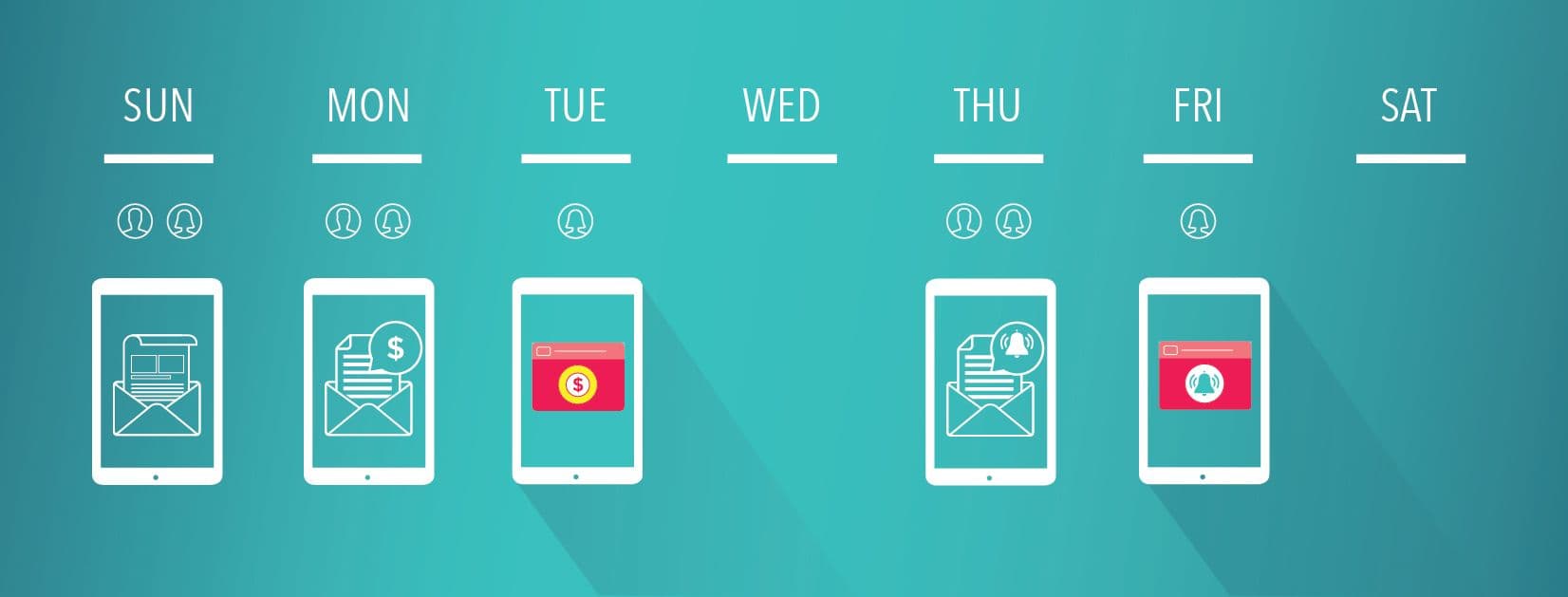

Example of a test in which a control group does not receive push notifications.

Why a control group is a good idea

Using control groups sheds light on marketing results in two helpful ways. First, they provide insight and context about how your campaigns and campaign variants are affecting your users’ behaviors, if at all. Second, they give you intel that can help limit over-messaging, particularly when it comes to cutting back on campaigns that, on closer inspection, may not really be moving the needle. This matters because your customers are likely being inundated with marketing messages across channels from a variety of competing marketers.

Picture this scenario: You send out a campaign (without a control group) and notice high conversion rates resulting from the campaign. Your initial reaction might be to conclude that some aspect of that campaign, be it the message copy, image, delivery, or cadence, is driving this KPI increase. Now imagine you send the same campaign with a control group: This time you might see that the control group and the group that sees the campaign have similar conversion rates. In this scenario, the takeaway is that users are behaving the same way whether or not they receive the new campaign, so the campaign itself is probably not what’s driving those conversions.
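To make the scenario above concrete, here is a minimal sketch of how you might check whether a gap between two conversion rates is bigger than chance alone would explain. It uses a standard two-proportion z-test; the function name and all of the numbers are hypothetical and for illustration only, not results from a real campaign or a feature of any particular tool.

```python
import math

def two_proportion_z_test(conv_test, n_test, conv_control, n_control):
    """Return (absolute_lift, z_score, two_sided_p_value) for test vs. control."""
    p_test = conv_test / n_test
    p_control = conv_control / n_control
    # Pooled conversion rate under the assumption that both groups behave the same.
    pooled = (conv_test + conv_control) / (n_test + n_control)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_test + 1 / n_control))
    if se == 0:
        # Everyone (or no one) converted in both groups: no detectable difference.
        return p_test - p_control, 0.0, 1.0
    z = (p_test - p_control) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_test - p_control, z, p_value

# Hypothetical numbers: 10,000 users received the campaign, 1,000 were held out.
lift, z, p = two_proportion_z_test(1200, 10_000, 118, 1_000)
print(f"lift={lift:.3%}, z={z:.2f}, p={p:.3f}")
```

With these made-up numbers the two groups convert at nearly the same rate, and the p-value is far above the common 0.05 threshold, which is the "similar conversion rates" outcome described above. A small p-value would instead suggest the campaign is associated with a real difference.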

How to segment appropriately

Your segment should always be large enough to produce statistically significant results and to be representative of the audience you’re trying to get insights about. If you run a test with a small group, you might find that 100% of a segment of users behaves in a way that supports your hypothesis, but if that segment is only made up of 10 users, these likely aren’t results you can trust.
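One way to see why a 10-user result is hard to trust is to put a confidence interval around the observed rate. The sketch below uses the Wilson score interval, a common choice for proportions; the function name and the sample counts are assumptions chosen for illustration.

```python
import math

def wilson_interval(conversions, n, z=1.96):
    """Approximate 95% Wilson score interval for a conversion rate (z=1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = conversions / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot.
    return max(0.0, center - half_width), min(1.0, center + half_width)

# Hypothetical segments: every user converted in both, but the sizes differ.
for n in (10, 1_000):
    low, high = wilson_interval(n, n)
    print(f"{n} of {n} converted: plausible true rate between {low:.1%} and {high:.1%}")
```

For the 10-user segment, the interval stretches down to roughly 72%, so "100% converted" is compatible with a much lower true rate. With 1,000 users the same observation pins the rate down far more tightly.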

- Make sure your audience segmentation targets the same characteristics for the control and the variant, so you’re comparing two groups that are actually comparable. (For example, don’t have your control group be all men in their 30s and your test group be all women in their 50s.)

- Focus on one variable that you want to test. If you change too many variables at once in your test campaign, you won’t be able to tell which change caused the difference, and the data you’ll get from comparing the test to the control will be less actionable and less reliable.

- Give your test plenty of time to run, ideally at least a week, to control for variations caused by different days of the week. In general, a longer test period better controls for external factors, like day of week, seasons, and holidays. Additionally, the longer a test runs, the more data you collect, and the more likely you are to detect a real difference with statistical significance.

- If generating a conversion for your category of app usually takes longer (like a grocery shopping app, where users tend to update their meals once a week), then running the test for a longer period of time makes sense.

Signs further testing is needed

All tests are likely to yield some uncertainty, but here are some indicators you may need additional testing:

- When you notice a significant difference between your test and control groups, don’t automatically attribute that difference in behavior to your campaign. First do a sanity check to make sure the results make sense in context, since there’s always a chance that external factors are affecting your test. A good practice is to formulate a hypothesis up front, based on logical guesses about how your campaign will perform, so you have something to check the results against.

- If the results for the control group and variant are similar, you may need to go back to the drawing board.

- If the control group has lower conversion rates but higher retention rates, or your important KPIs otherwise move in different directions, you might need to adjust your variants so they produce both higher conversions and higher retention.

When a control group may be unnecessary

Chances are, if you’re changing up your strategy, you should be using a control group to guide you in the process. Even a select portion, say as low as 5-10% of your overall audience, is worth having to confirm your baseline assumption: that your campaign/message will drive a different behavior from the users who receive it compared to users who do not. That being said, if you absolutely want every audience member to have the chance of seeing the same exact campaign or marketing change, that could be a reason not to use a control group. However, you do always have the option of sending the marketing message to the control group once your experiment is complete.
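If you are building the holdout yourself rather than relying on a marketing platform, one common approach is to assign users to the control group by hashing their IDs. The sketch below is illustrative only: the function name, the experiment label, and the 10% default are assumptions, not a description of how Braze or any other tool does it.

```python
import hashlib

def assign_group(user_id, experiment, control_fraction=0.10):
    """Deterministically place a user in 'control' or 'test' for one experiment."""
    # Including the experiment name means a user can land in different
    # groups for different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "control" if bucket < control_fraction else "test"

# Hypothetical user IDs and experiment name.
groups = [assign_group(f"user-{i}", "new-push-channel") for i in range(10_000)]
print(f"control share: {groups.count('control') / len(groups):.1%}")
```

Because the assignment depends only on the user ID and the experiment name, the same user always lands in the same group, and the split does not depend on traits like age or gender. That keeps the control and test groups comparable. Once the experiment is complete, the users labeled `control` are the ones you can follow up with if you still want them to see the message.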

In really urgent and timely cases, such as breaking news or a call to action for all users to update your app, the need for all users to see the notification may be more important than finding out how a test group would react.

Ready to take testing to the next level?

Read our guide that explores A/B vs. Multivariate Testing: When to Use Each.
