3 Marketing Experiments That (Actually) Paid Off

Published on December 16, 2015/Last edited on December 16, 2015/7 min read

Team Braze

Uncertainty is the norm in marketing. Whether you’re working at a large company or a small startup, there will always come a time when an idea or campaign feels like a gamble.

In these situations, you have several ways to answer your questions: you could conduct extensive market research, dig through secondary research studies, pay a consultant, or simply take the risk and move forward.

Or, you could run a quick marketing experiment. That’s what the three businesses here did, from startups MightyHandle and OkMyOutfit to international company Molson Coors.

Take the scientific method as a guide: identify a business question that you want to answer or challenge that you want to solve, outline a hypothesis, design an experiment, evaluate the results, course-correct on any weaknesses, and build upon the successes.

Read on to learn how these three marketing experiments resulted in valuable insights.

1) MightyHandle’s quest for the right packaging concept

The challenge

In 2014, consumer packaged goods (CPG) startup MightyHandle, a company that creates handles for carrying heavy bags, faced the biggest opportunity of its startup life: the company’s marketing advisor, Anita Newton, had lined up an in-store test in Walmart stores nationwide. If the experiment were successful, MightyHandle would begin appearing on store shelves in 2015. There was a lot at stake for the startup, which was still in its infancy.

The hypothesis

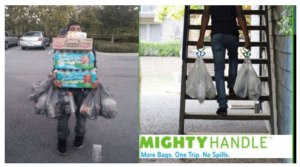

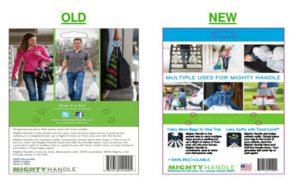

Newton suspected that MightyHandle would need a new packaging concept, because Walmart’s shoppers (suburban moms) were a different demographic from MightyHandle’s existing customers (twenty-something city dwellers). Based on past data from Amazon reviews and customer polls, she came up with a list of concepts to test (see the accompanying images).

The experiment

What Newton didn’t have, however, was access to a large branding budget. Extensive market research projects were out of the question, and with the Walmart test just weeks away, the startup didn’t have much time for improvising.

Newton realized something: on channels like Facebook, she could target the exact audience that she wanted to reach. So, she launched a marketing campaign to test different creative concepts: images, taglines, and combinations of both that presented her target demographic with different uses for the handle. For a few hundred dollars, she tested these concepts until she identified the winning images and messages (see the accompanying comparison), which clearly showed which of the handle’s uses her new target demographic of suburban moms valued the most. She then validated that data by running a complementary campaign to the same audience segment on a different ad network. The winning creative concept won again.

The outcome

Newton decided to use these advertising concepts for MightyHandle’s Walmart test. The product performed so well on shelves that the two companies decided to extend their relationship: MightyHandle is now present in thousands of Walmart locations.

The takeaway

Before chasing a big idea or initiating a large marketing spend, test your idea in a lower-risk campaign. Once you have a working model set up, and statistically significant data to back up your approach, you can increase your marketing spend or jump into a larger market.
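One way to check whether a small test has produced statistically significant data is a two-proportion z-test on the conversion rates of two ad variants. The sketch below is only an illustration of that idea, not the method any of the companies in this article used. The function name and every number passed to it are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a, conv_b: conversions (e.g. clicks) for variants A and B
    n_a, n_b: impressions (or visitors) for variants A and B
    Returns (z, p_value). All inputs are hypothetical illustration values.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")

    rate_a = conv_a / n_a
    rate_b = conv_b / n_b

    # Pooled rate under the null hypothesis that both variants perform equally
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("no variation in the data; the test is undefined")

    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, calling `two_proportion_z_test(40, 2000, 70, 2000)` compares a made-up 2.0% click rate against a made-up 3.5% click rate. A p-value below a threshold you chose in advance (0.05 is a common convention) suggests the difference is unlikely to be noise. With very small samples, treat the result as a rough guide only.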

2) Molson Coors’ creative weather targeting campaigns

The challenge

It’s well known among CPG companies that buyers make in-the-moment decisions: you need to reach them with the right messages at the right time. But CPG companies are also somewhat removed from their customers, since a retailer or middleman often facilitates everyday transactions. Molson Coors wanted to see whether ‘trigger-based campaigns’, a tactic often used by retailers, could make the company’s marketing campaigns more efficient.

The hypothesis

Through years of customer research, Molson Coors had long been aware of a correlation between weather and happy hour beverage sales. Noticing that people stop for drinks when the weather is pleasant, the company saw an opportunity to boost sales.

They had an interesting idea—that sunny weather conditions could be a trigger for higher ad engagement. So, they came up with a Facebook advertising campaign to test the impact of weather-based targeting.

The experiment

The company decided to run a Facebook campaign, pointing audiences to one of Molson Coors’ cider brands, with a call-to-action encouraging them to buy the drink at a local happy hour.

Molson Coors served this mobile campaign to Facebook users in one Canadian market. The goal was to run targeted ads triggered by warm weather conditions, to steer more people to local happy hours. To test the hypothesis, Molson Coors developed weather-specific creative concepts (for instance, “Hot out there, Toronto?”). The company also created a control group campaign that lacked weather-specific messaging (for instance, “What time is it? It’s Beer o’clock.”).

The results

Real-time ads switched on when local conditions were sunny and the temperature hit 23 degrees Celsius (73 degrees Fahrenheit) or higher. Molson Coors served these ads to specific demographic groups in cities like Winnipeg, Vancouver, Toronto, and Halifax. The end result? Weather-specific ads outperformed the control ads, with an 89% higher click-through rate and a 33% higher rate of post comments.
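To make the trigger and the reported lift concrete, here is a minimal sketch of the two calculations involved. It assumes the trigger is a simple rule (sunny, at or above 23 degrees Celsius) and that "89% higher" means relative lift over the control. The function names are hypothetical, and this is not Molson Coors' or Facebook's actual implementation.

```python
def celsius_to_fahrenheit(temp_c):
    """Convert a temperature from Celsius to Fahrenheit."""
    return temp_c * 9 / 5 + 32


def should_serve_weather_ad(temp_c, is_sunny, threshold_c=23.0):
    """Return True when the weather-specific ad should switch on.

    The 23-degree threshold comes from the article; the rule itself
    is an assumed simplification of whatever logic the campaign used.
    """
    return bool(is_sunny) and temp_c >= threshold_c


def relative_lift(test_rate, control_rate):
    """Relative improvement of the test rate over the control rate.

    A return value of 0.89 corresponds to an "89% higher" rate.
    """
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (test_rate - control_rate) / control_rate
```

As a made-up illustration, a control click-through rate of 1.00% and a weather-ad click-through rate of 1.89% would give `relative_lift(0.0189, 0.010)` of roughly 0.89. The article does not report the underlying rates, so these inputs are placeholders.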

The takeaway

Thanks to technology, the lines between targeting channels are blurring. Molson Coors was able to use in-the-moment, real-time data in a very creative way. Testing new ways to target your audience can lead to valuable information about your customers.

3) OkMyOutfit’s value optimization campaign

The challenge

In 2014, OkMyOutfit, a company that connects fashion stylists with consumers who need style advice, was bringing its service to market for the first time. At launch, the company’s founder, Diana Melencio, found herself wondering how to price OkMyOutfit’s services.

The hypothesis

Melencio was worried that her pricing model, a flat fee of $89, was too high for her audience. She hypothesized that a subscription model, at a $3.99 monthly price point, would spark more interest from her audience.

The experiment

Melencio and her co-founder ran a Facebook advertising campaign on a small budget of several hundred dollars. They identified potential customers in their target demographic and sent this audience to two versions of the landing page.

The result

One pricing model was definitely more successful than the other. “We found that around the same number of people clicked on both ads, but that only those in the $3.99 group were adventurous enough to complete the service request of our unknown service—because it was a nominal enough amount,” said Melencio in an interview with WPCurve.

Melencio learned that her audience might prefer a subscription pricing model, which led her to several other pricing tests. What she discovered in the end, though, was that OkMyOutfit needed to focus on showcasing the value of their service. “It was an issue of our translating the value of our virtual service—a top fashion stylist—in a time when the majority of people are used to getting things free online,” Melencio said.

The takeaway

Sometimes when you’re testing one thing, you’ll collect data that points to other areas of your marketing that need attention. Let your experiment surprise you.

Insights don’t have to cost a ton

You don’t need a huge budget to build the ‘perfect’ marketing campaign. Focus on a specific question and work to find the answer that works for your company. In this way, some of your most valuable marketing campaigns will be testbeds for big ideas, problem-solving tools, and mechanisms to listen to your customers.
