Why Message Testing Matters When It Comes to Campaign Performance

Published on December 15, 2020/Last edited on December 15, 2020/4 min read

Adam Swiderski

Writer

When it comes to what makes for successful messaging, there are a lot of variables. Brands that hope to make an impression need to reach consumers at the right time, with the right wording, and through the right channels in order to stand out in overflowing inboxes and notification queues. At times, it might feel like the most important item in a promotional toolbox would be a crystal ball, since it’s so crucial to understand consumer preferences and behaviors.

Fortunately, there’s a real-world solution that requires a lot less arcane effort: testing. Thanks to platforms like Braze, it’s possible to, in a manner of speaking, try before you buy, giving you a picture of the types of messaging that are most likely to resonate with your audience… and even to get down into the kind of fine detail that might seem nit-picky, but which can make a huge difference in performance indicators like email open rates.

It’s not quite prophecy, but with apologies to Nostradamus, it’s potentially even more effective in making sure a messaging campaign is successful. Let’s dive into how testing can make the difference.

Divide and Divine

At root, message testing isn’t much more complicated than it sounds: It’s the process of trying out different variations of messaging to determine which is more effective. The two main types of testing generally put to use are the A/B and multivariate varieties. The first is more or less self-explanatory: You have two messages that differ by one variable, such as the subject line of an email or a particular aesthetic choice, and you want to see which works better. Multivariate testing is more complex, involving more than one variable. Say, for example, you have two different subject lines and a message that includes emojis vs. one that doesn’t. Mixing and matching just those two details creates four variations on the message, and multivariate testing allows you to find out which will have the most impact.
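
To make the "four variations" arithmetic concrete, here is a minimal sketch in Python. The subject lines and the `build_variants` helper are placeholders invented for illustration; they are not taken from any particular campaign or from the Braze platform.

```python
from itertools import product

# Hypothetical values for the two variables described above.
subject_lines = ["Subject line A", "Subject line B"]
emoji_options = [True, False]


def build_variants(subjects, emoji_flags):
    """Return every combination of subject line and emoji setting."""
    return [
        {"subject": subject, "use_emoji": use_emoji}
        for subject, use_emoji in product(subjects, emoji_flags)
    ]


variants = build_variants(subject_lines, emoji_options)
for number, variant in enumerate(variants, start=1):
    print(number, variant)
```

Two subject lines times two emoji settings gives four variants. Adding a third variable with two options would double that to eight, which is why multivariate tests tend to need larger audiences than simple A/B tests.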

Combined with properly applied segmentation, this type of testing can be a powerful asset in making sure consumers are receiving messages to which they’re likely to respond. Sending variant messages to a portion of your audience and gathering data on how they’re received allows you to formulate a more effective strategy when rolling out full campaigns at scale. What’s more, since testing can be done constantly, it’s possible to dynamically respond to changes in consumer preferences and behavior over time, making for messaging that is always primed for peak effectiveness.
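
One common way to send variants to only a portion of an audience is to bucket users by a hash of their ID, so the same person always lands in the same group. The sketch below is an assumption about how that could be done in general, not a description of how Braze or any other platform implements it; `assign_variant`, `test_fraction`, and the user IDs are all hypothetical.

```python
import hashlib


def assign_variant(user_id, variant_names, test_fraction=0.1):
    """Deterministically place a user in a test variant, or return None.

    Roughly `test_fraction` of users receive a variant; everyone else is
    left out of the test and would get the regular campaign later.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 1000        # stable value from 0 to 999
    threshold = round(test_fraction * 1000)    # e.g. 100 for a 10% test
    if bucket >= threshold:
        return None                            # not part of the test audience
    # Spread the test audience across the variants.
    return variant_names[bucket % len(variant_names)]


# Hypothetical usage with made-up user IDs.
for user_id in ["user-1", "user-2", "user-3"]:
    print(user_id, assign_variant(user_id, ["A", "B"], test_fraction=0.5))
```

Because the assignment depends only on the user ID, rerunning the code does not reshuffle anyone, which keeps results comparable if a test runs over several sends.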

BlaBlaCar Aces the Test(ing)

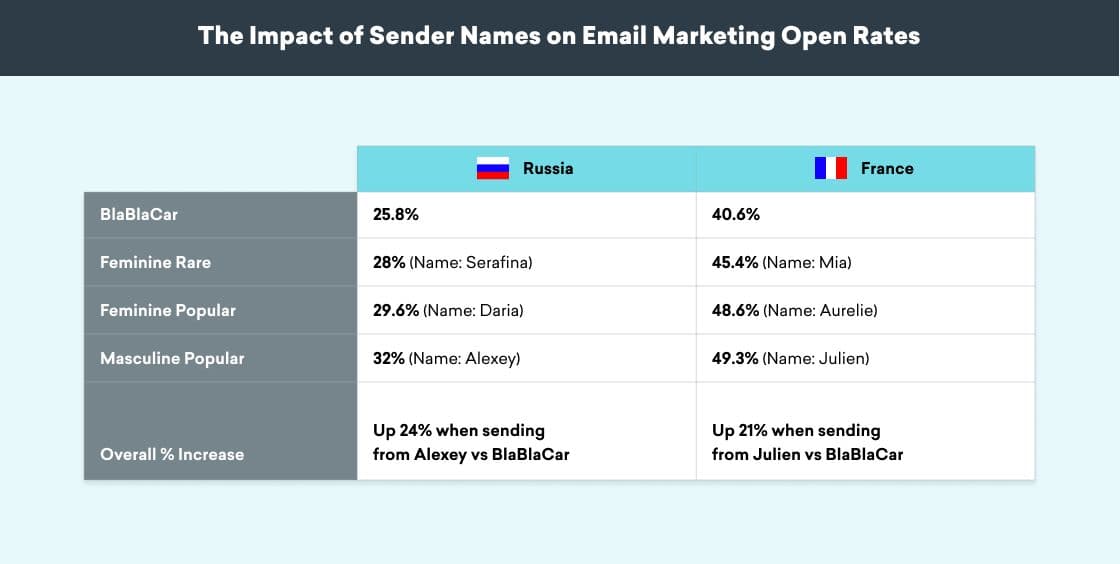

Let’s look at a real-world example that demonstrates just how important the finer details of messaging can be, and how testing can make a difference in ensuring they’re just right. French carpooling service BlaBlaCar serves some 65 million registered drivers and passengers across 22 countries, who use the company’s app and website to plot shared rides. In an effort to maximize its email open rates, it engaged in a message testing campaign based on a variable many might overlook when it comes to email: the name of the sender. BlaBlaCar’s hypothesis was that not only would using an individual’s name be more effective than the name of the company, but that certain names would be more successful than others.

To prove it out, they launched an A/B testing campaign in both their home country and Russia to determine whether an email with a first-person sender name would perform better than an email from BlaBlaCar. To dig even deeper, they varied the names in question to test whether names that were masculine or feminine and common or uncommon would change anything about their open rates.

The results were striking, with the test campaign revealing that use of a first-person name, rather than “BlaBlaCar,” in the sender field resulted in 20% higher open rates, in general. When the type of name used was taken into account, the gains were even larger, with 24% higher open rates in Russia and 21% higher open rates in France when a common masculine name was used. In the end, BlaBlaCar’s message testing resulted in immediately actionable tactics the company could implement to significantly improve its promotional performance.
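
For readers who want to see how a figure like "20% higher open rates" can be computed, here is a minimal sketch. It assumes the percentage is a relative lift over the control, which the case study does not spell out, and the counts below are invented for illustration; they are not BlaBlaCar’s data.

```python
def open_rate(opens, delivered):
    """Share of delivered emails that were opened."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return opens / delivered


def relative_lift(control_rate, variant_rate):
    """How much higher the variant's rate is, as a fraction of the control's."""
    return (variant_rate - control_rate) / control_rate


# Made-up counts: company name as sender vs. a first-person sender name.
control = open_rate(opens=200, delivered=1000)
variant = open_rate(opens=240, delivered=1000)
lift = relative_lift(control, variant)
print(f"Relative lift in open rate: {lift:.0%}")
```

Note that a relative lift of 20% here corresponds to a change of only four percentage points (from 20% to 24%), so it is worth checking which of the two a reported result refers to.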

Final Thoughts

There are so many factors that go into whether and how a consumer interacts with a marketing message, and predicting which will make the difference can seem like an impossible task. With smart message testing, however, savvy brands can turn reading these seemingly indecipherable tea leaves into a science that yields critical data around which tactics and strategy can be formed.
