How to Calculate the Revenue Your Emails are Generating

Published on July 18, 2019/Last edited on July 18, 2019/10 min read

Michelle Huang

Email and Messaging Lead at Canva

So you’ve got an email program. Maybe it’s working for you: engaging your customers, building relationships, and supporting your business goals. Maybe it isn’t. Without an effective measurement plan in place, you can find yourself wandering in the dark, armed with little more than the hope that you’re on the right track.

Quantifying the effect that your email comms have can be key to solving your biggest internal blockers, and it can make it possible to take your strategy to a whole new level. After all, it’s true that “you can’t improve what you can’t measure.” In my time at Canva, I’ve found that measuring and quantifying the impact of our email marketing comms has helped me communicate with key stakeholders, solve roadblocks, strategize, and get the resources we need.

Given that, I wanted to share some key insights I’ve learned that should help you better assess how your email program is functioning and optimize the campaigns you send.

Why should you measure the impact of your email program?

In short, having a data-informed perspective can empower you to:

- Put tangible numbers against your email marketing comms

- Grow your marketing budget

- Get your internal requests prioritized

- Bolster respect for email as a channel and your work

- Align your areas of focus with the ones that drive the most impact

- Identify and address your lowest-performing campaigns

- Guide investment in large customer engagement projects

- And report on your success as a team.

To dig a little deeper into this part of the equation, check out my earlier article on the benefits of measuring your email marketing program.

What results can you expect from measuring your email program?

Every company (and every email marketing program, for that matter) is a little different. But there are benefits that come with effective measurement of those programs that you can expect to see across the board. They include:

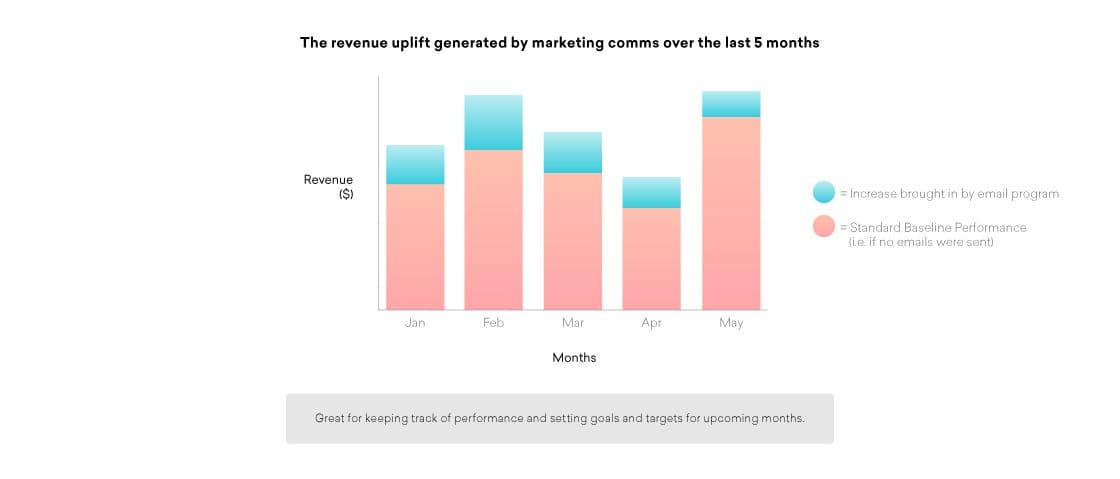

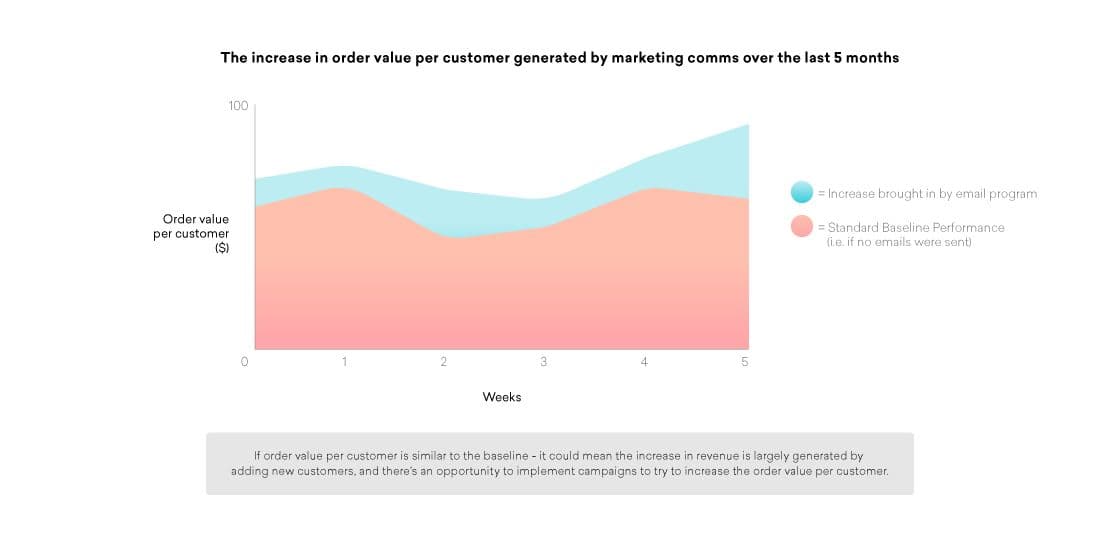

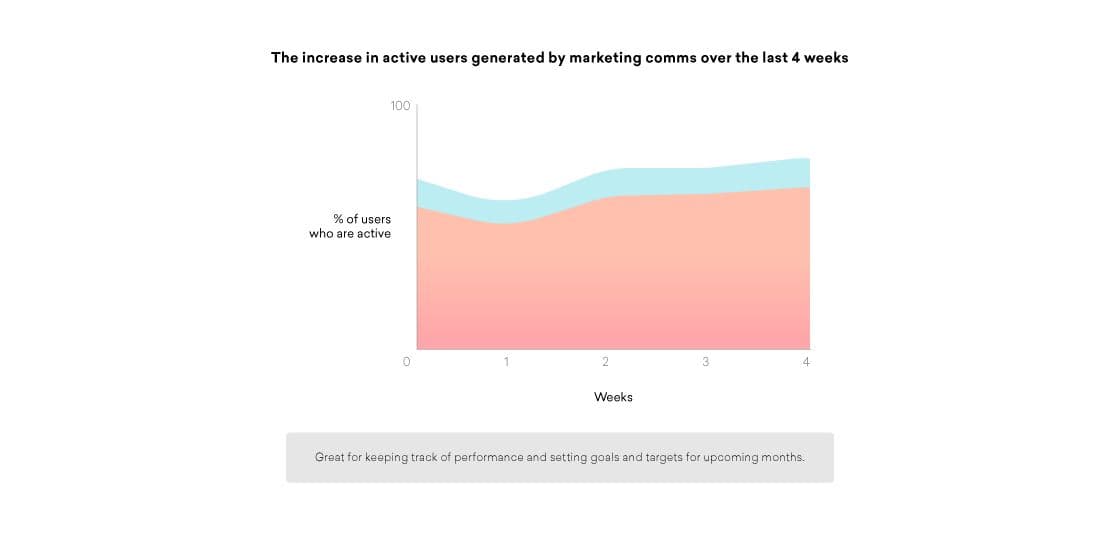

- A comprehensive, bird’s-eye view of the overall impact of all your email campaigns

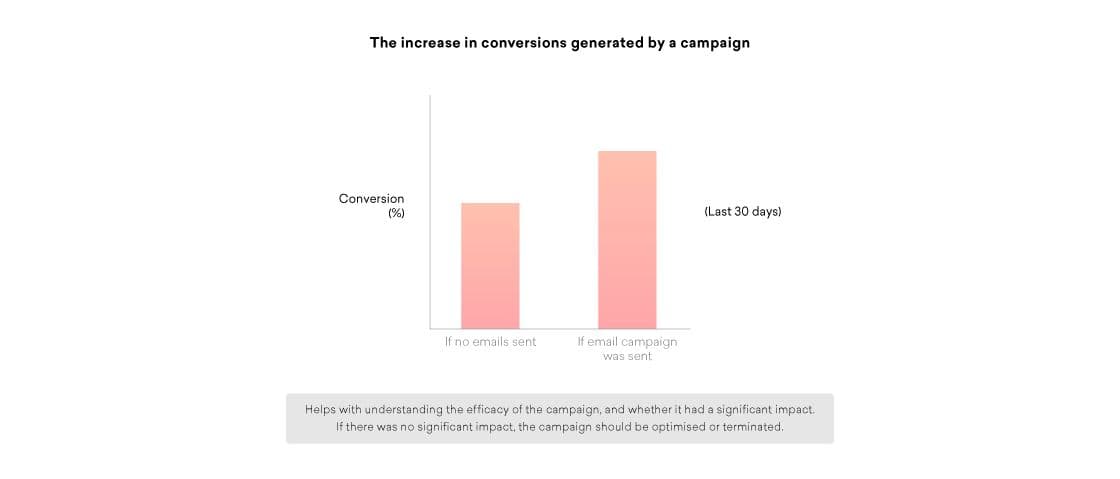

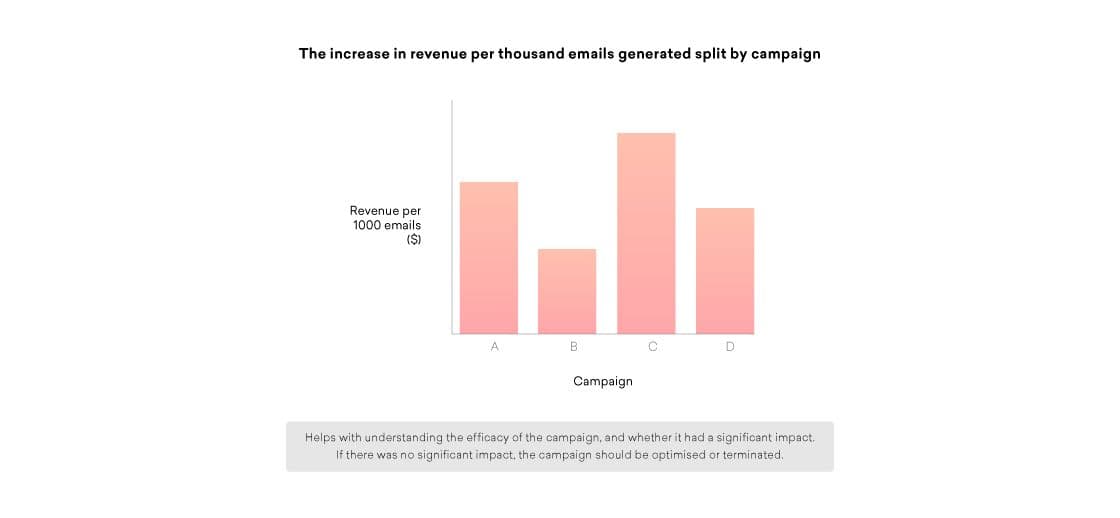

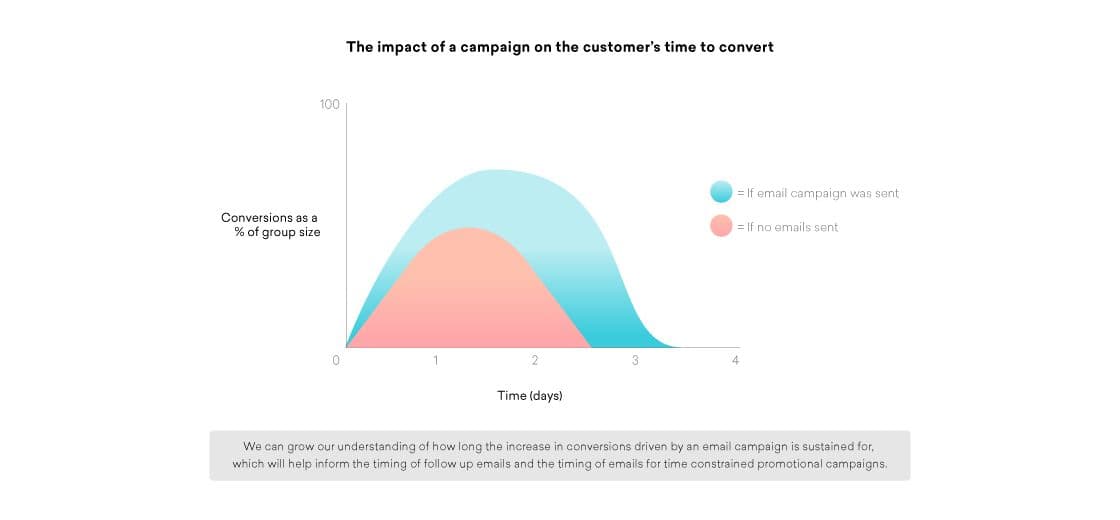

- The ability to break down and analyze the effectiveness of each campaign, making it easier to ensure optimal iteration of that outreach.

Let’s dig a little deeper with a few examples of the kinds of data and learnings you can expect, and the value they can provide:

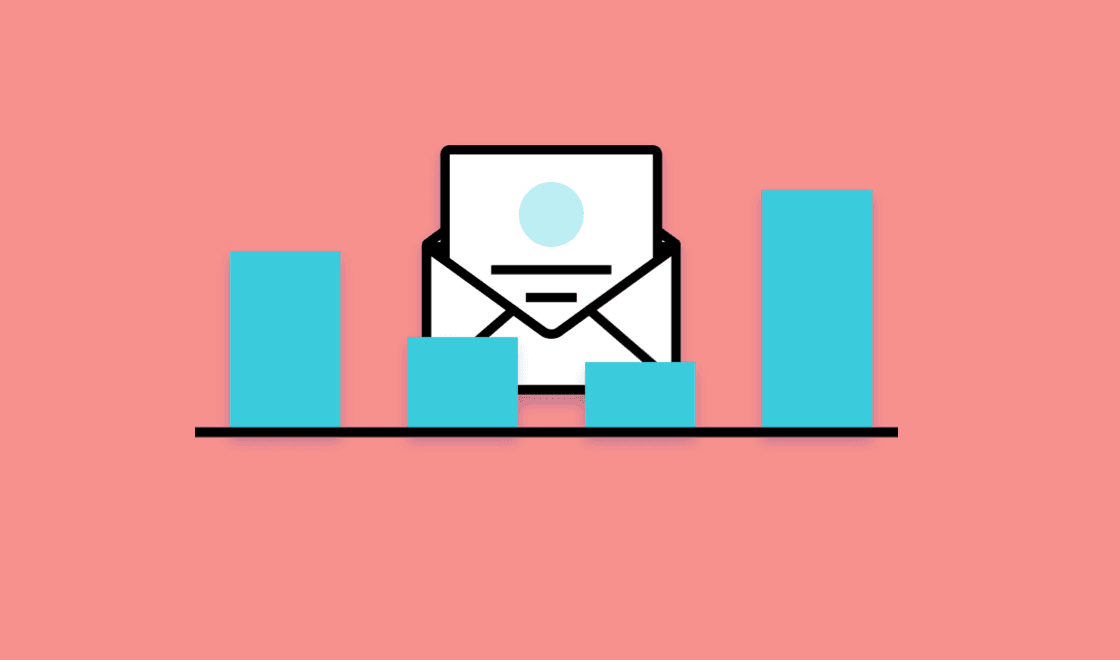

1. Understanding the general impact of your marketing comms

2. Gaining a detailed understanding of each campaign’s impact

When it comes to measuring your email program, what do you need to get started?

Ultimately, what you need is a way to tell whether your email comms are having an impact on key performance indicators (KPIs) and on your bottom line. To do that, you need to split your user base into two distinct segments in order to create a global control group and a global experiment group:

The Global Control Group

This group will not receive any of your email marketing comms or campaigns, making it possible to set baseline standards for conversion rates, revenue per user, and all other key metrics that your business might care about. In a nutshell, this group’s behavior reflects what sorts of results you would see if you simply didn’t send any marketing comms.

The Global Experiment Group

This group will receive your email marketing comms and campaigns. That means that the behavior they exhibit is influenced by the outreach you send, giving you a clear point of comparison with the results associated with your global control group. This difference in spend and activity between the two groups is what you can attribute to your collective marketing efforts.

What’s the benefit of measuring your email marketing program this way?

In general, the benefit to the approach is that it makes it possible to have a comprehensive view into whether your email marketing comms are moving the needle or not. It also makes it possible to:

Avoid over-attribution

So a customer clicked on your email and then made a purchase—that’s great! But how can you be sure that they weren’t going to buy it anyway? After all, if 15% of the customers you emailed converted and made a purchase, it doesn’t mean all of those orders were caused by the email; for all you know, 14.5% might have made a purchase anyway.

Because you’ve got your global control group, you can see what percentage of your users would have made a purchase sans email and use that metric to more accurately assess the impact that your marketing comms had. For instance, if 10% of control group users made a purchase last week, but 15% of users who received the email made a purchase, you can be confident that the 50% relative increase in conversions (five percentage points) was associated with your message. By accurately assessing what’s working, you can focus your attention and effort more effectively on the places that will drive the most impact!
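To make that arithmetic concrete, here is a minimal sketch using the 10% and 15% figures from the example. The function and variable names are illustrative, not part of any particular tool.

```python
def relative_lift(control_rate: float, treated_rate: float) -> float:
    """Relative increase of the treated rate over the control baseline."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (treated_rate - control_rate) / control_rate


control_rate = 0.10  # share of control group users who purchased
treated_rate = 0.15  # share of emailed users who purchased

# Percentage-point difference between the two groups.
absolute_lift = treated_rate - control_rate

# Increase relative to the no-email baseline.
lift = relative_lift(control_rate, treated_rate)

# Share of the emailed group's purchases that the email actually added.
# The rest would have happened anyway, which is the over-attribution risk.
incremental_share = absolute_lift / treated_rate

print(f"Absolute lift: {absolute_lift:.1%} points")
print(f"Relative lift: {lift:.0%}")
print(f"Incremental share of purchases: {incremental_share:.0%}")
```

In this example only about a third of the purchases made by emailed users are incremental, even though every one of those purchases might look like an email conversion in a click-based report.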

Escape bias in comparisons

It’s natural for marketers to want to compare the conversions associated with users who received a given campaign versus users who haven’t. But if you assess that relationship without having established control and experiment groups, you run the risk of making a biased comparison.

Why? Remember that you can only email users who have confirmed their email addresses, opted in for your marketing comms, and have remained subscribed and engaged with your emails. (Assuming, of course, that you’re following email best practices and cleaning your list in accordance with your email sunset policy.) All of that means that these users will tend to have higher conversions than users who you can’t email because they haven’t confirmed their emails, entered invalid email addresses, unsubscribed, etc.

Understand the value of your email program as a whole

A great email can have a great impact—but a lot of the time, it’s not one email that converts a user, but rather a whole series of campaigns that help to persuade them over time.

By setting up global experiment and control groups, you can more accurately assess the impact of your marketing campaigns in tandem with each other. That kind of understanding is crucial when it comes to roadmapping future projects, setting thoughtful goals, and building and scaling your marketing team.

Evaluate weekly newsletter performance and other ongoing campaigns

For certain kinds of campaigns, being able to showcase indirect attribution is especially valuable. For instance, your email newsletters may not always generate a direct click and conversion from a particular customer, but they’re still an incredibly strong branding play that can have an incremental positive impact on your marketing program. Personally, I’ve found that seeing a retailer’s name frequently in my email inbox increases how often I organically visit that retailer’s online store, make a purchase, or open the app, all without ever clicking through on the newsletter itself.

Because global experiment and control groups help you measure the impact of the “top of mind” effect that a given campaign can generate, they can help to give a fuller picture of the value that even more branding-focused messages can provide.

How can I set up this kind of measurement program for my email marketing?

First, you need to create your global experiment and control groups. One key thing to keep in mind as you’re creating these audience segments is that the two groups need to be completely identical except for a single randomized variable. Otherwise, you run the risk of comparing two groups that aren’t actually equivalent and producing misleading findings.

Creating global experiment and control groups

For example, imagine that your brand has 1,000 users. To create a control group that represents 10% of your audience and an experiment group made up of 90% of your audience, you’d assign all users a random number between 1 and 1,000 and then create a user segment made up of all users whose random number is less than or equal to 100. That segment is your control group. Next, you’d create your experiment group by establishing a segment of all users with a random number that’s greater than 100.
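As a rough sketch of that assignment step, the snippet below gives each user a random number from 1 to 1,000 and places numbers 1 through 100 in the control group, which works out to about 10% of users. The function name, the seed, and the use of plain integer user IDs are assumptions made for illustration; your messaging platform may provide its own random bucketing.

```python
import random

CONTROL_THRESHOLD = 100  # draws 1..100 out of 1..1000 land in control (about 10%)


def assign_groups(user_ids, seed=42):
    """Map each user ID to "control" or "experiment" using a random draw."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    groups = {}
    for user_id in user_ids:
        draw = rng.randint(1, 1000)  # inclusive on both ends
        groups[user_id] = "control" if draw <= CONTROL_THRESHOLD else "experiment"
    return groups


# Hypothetical audience of 1,000 users with IDs 1..1000.
groups = assign_groups(range(1, 1001))
control_size = sum(1 for g in groups.values() if g == "control")
print(f"control: {control_size}, experiment: {len(groups) - control_size}")
```

Because each draw is independent, the control group will be close to 10% rather than exactly 10%. Whatever method you use, store the assignment so that a user stays in the same group across every campaign.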

One thing to think about as you’re creating these segments is the correct sizing of your control group. In general, you should determine the size of that group based on the volume you need to get statistically significant results on that group’s actions in a timely manner. You don’t want to have to wait months to see significance in your results—but you also want to make your control group as small as possible, since otherwise a major chunk of your audience will be missing out on your email marketing comms.
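One way to put a number on "big enough" is a standard sample size estimate for comparing two conversion rates. The sketch below is only one possible approach, not the method used at Canva. It assumes a two-sided test at a 5% significance level with 80% power and an experiment group nine times the size of the control group, and the conversion rates passed in are hypothetical planning inputs.

```python
from math import ceil
from statistics import NormalDist


def control_group_size(p_control, p_experiment, ratio=9.0, alpha=0.05, power=0.80):
    """Approximate control group size needed to detect the given difference.

    ratio is experiment group size divided by control group size.
    Uses the normal approximation for a two-proportion comparison.
    """
    if p_control == p_experiment:
        raise ValueError("the two rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_experiment * (1 - p_experiment) / ratio
    return ceil((z_alpha + z_power) ** 2 * variance / (p_control - p_experiment) ** 2)


# Hypothetical planning inputs: 10% baseline conversion, hoping to detect 15%.
needed = control_group_size(0.10, 0.15)
print(f"Control group users needed: {needed}")

# A smaller expected effect needs a much larger control group.
print(f"To detect 10% vs 11%: {control_group_size(0.10, 0.11)}")
```

Keep in mind that these counts refer to users who are actually eligible for the campaign being measured during the reporting window, so the global control group usually needs to be larger than the raw number suggests.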

Flagging relevant control group users

In order to make effective use of your control group, it’s not enough to ensure that the campaigns you send are sent only to the global experiment group. You also need to flag the users in your control group who would have received a given campaign if they weren’t part of the control.

Why? Well, your campaign is likely being targeted to users within the global experiment group based on particular attributes or behaviors they share, which means that you aren’t sending to the entirety of the experiment group. As a result, you can’t compare that selected subset of the experiment group with the entirety of your control group and expect to get accurate insights.

Think about it like this. Your brand has a campaign it sends to users when they abandon their shopping cart, in order to remind them to complete that order. Imagine that 10% of your experiment group has abandoned a cart and therefore is eligible to receive the campaign—if you don’t flag equivalent users in the control group, you can find yourself comparing results from 10% of the experiment group with 100% of the control group. But if you flag the 10% or so of the control group who would have been eligible for the campaign if they weren’t part of the control, you can have a clearer comparison.

In the example above, if the abandoned cart email had no impact on conversions, it would still appear very successful if you failed to flag control group users. Why? Because the conversion rate of users in the control would appear extremely poor in comparison to those in the relevant experiment group segment, since 90% of the people in the control group hadn’t abandoned a cart and therefore couldn’t possibly convert.
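The effect is easy to reproduce with made-up numbers. The sketch below builds a small synthetic audience in which the abandoned cart email has no effect at all (10% of cart abandoners complete their order in both groups) and then compares the biased and the fair calculation. All counts and names here are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class User:
    group: str             # "control" or "experiment"
    abandoned_cart: bool   # True if the user is eligible for the campaign
    completed_order: bool  # True if the user went on to complete the order


def conversion_rate(users):
    """Share of the given users who completed their order."""
    users = list(users)
    return sum(u.completed_order for u in users) / len(users)


def make_users(group, total, eligible, converters):
    """Synthetic users: the first `eligible` abandoned a cart and the first
    `converters` of those completed the order."""
    return [User(group, i < eligible, i < converters) for i in range(total)]


# Synthetic data where the email changes nothing: 10% of users abandon a
# cart and 10% of those abandoners complete the order in both groups.
users = make_users("experiment", 900, 90, 9) + make_users("control", 100, 10, 1)

sent = [u for u in users if u.group == "experiment" and u.abandoned_cart]
whole_control = [u for u in users if u.group == "control"]
flagged_control = [u for u in whole_control if u.abandoned_cart]

print(f"Emailed users:           {conversion_rate(sent):.0%}")
print(f"Whole control (biased):  {conversion_rate(whole_control):.0%}")
print(f"Flagged control (fair):  {conversion_rate(flagged_control):.0%}")
```

Against the whole control group the email appears to multiply conversions tenfold. Against the flagged control users the two rates match, which correctly shows that the email made no difference in this synthetic case.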

What does this kind of email campaign measurement look like in practice?

Let’s take a look at a hypothetical example similar to the abandoned cart campaign we talked about above. In this case, imagine that our fictional company has one million customers—with 900,000 of them in the global experiment group and 100,000 in the global control group—and we’re trying to test the impact of an abandoned cart campaign:

Based on the results above, we can see that the campaign has had a notable impact, increasing order completion rates from 4% to 7%. That’s a 75% jump in order completions, which translates to an uplift of 2,700 order completes driven by this campaign. But if we didn’t create an experiment and a control group, we couldn’t be confident that we’d moved the needle at all.
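The arithmetic behind those figures can be sketched as follows. The count of 90,000 eligible experiment-group users is an assumption (10% of the 900,000 experiment group, matching the earlier abandoned cart example); it is also the count that makes a 4% to 7% change equal 2,700 orders. The average order value is purely hypothetical and is included only to show how order uplift turns into a revenue estimate.

```python
def campaign_uplift(eligible_users, control_rate, experiment_rate):
    """Return (incremental orders, relative lift) for a campaign."""
    expected_without_email = eligible_users * control_rate
    actual_orders = eligible_users * experiment_rate
    incremental_orders = round(actual_orders - expected_without_email)
    relative = (experiment_rate - control_rate) / control_rate
    return incremental_orders, relative


# Assumption: 10% of the 900,000 experiment-group users abandoned a cart.
eligible_experiment = 90_000
control_rate = 0.04      # order completion among flagged control users
experiment_rate = 0.07   # order completion among emailed users

incremental_orders, relative = campaign_uplift(
    eligible_experiment, control_rate, experiment_rate
)
print(f"Incremental orders: {incremental_orders:,}")  # 2,700
print(f"Relative lift: {relative:.0%}")               # 75%

# Hypothetical average order value, used only to illustrate the revenue step.
average_order_value = 50.00
incremental_revenue = incremental_orders * average_order_value
print(f"Estimated incremental revenue: ${incremental_revenue:,.2f}")
```

Swapping in your own eligible user count, conversion rates, and average order value gives an estimate of the revenue a campaign generated over and above what would have happened without it.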

Final Thoughts

Ultimately, taking a data-informed approach to marketing can be extremely empowering when it comes to decision making and communication. It makes it possible to better understand and communicate the impact and importance of your email program, your team’s need for resources, the rationale behind your decisions, the impact of your team’s work, and a whole lot more.


To dig a little deeper into email and what’s possible with this key channel, check out Next-Generation Email: Standing Strong In The Age Of Inbox Infinity.
